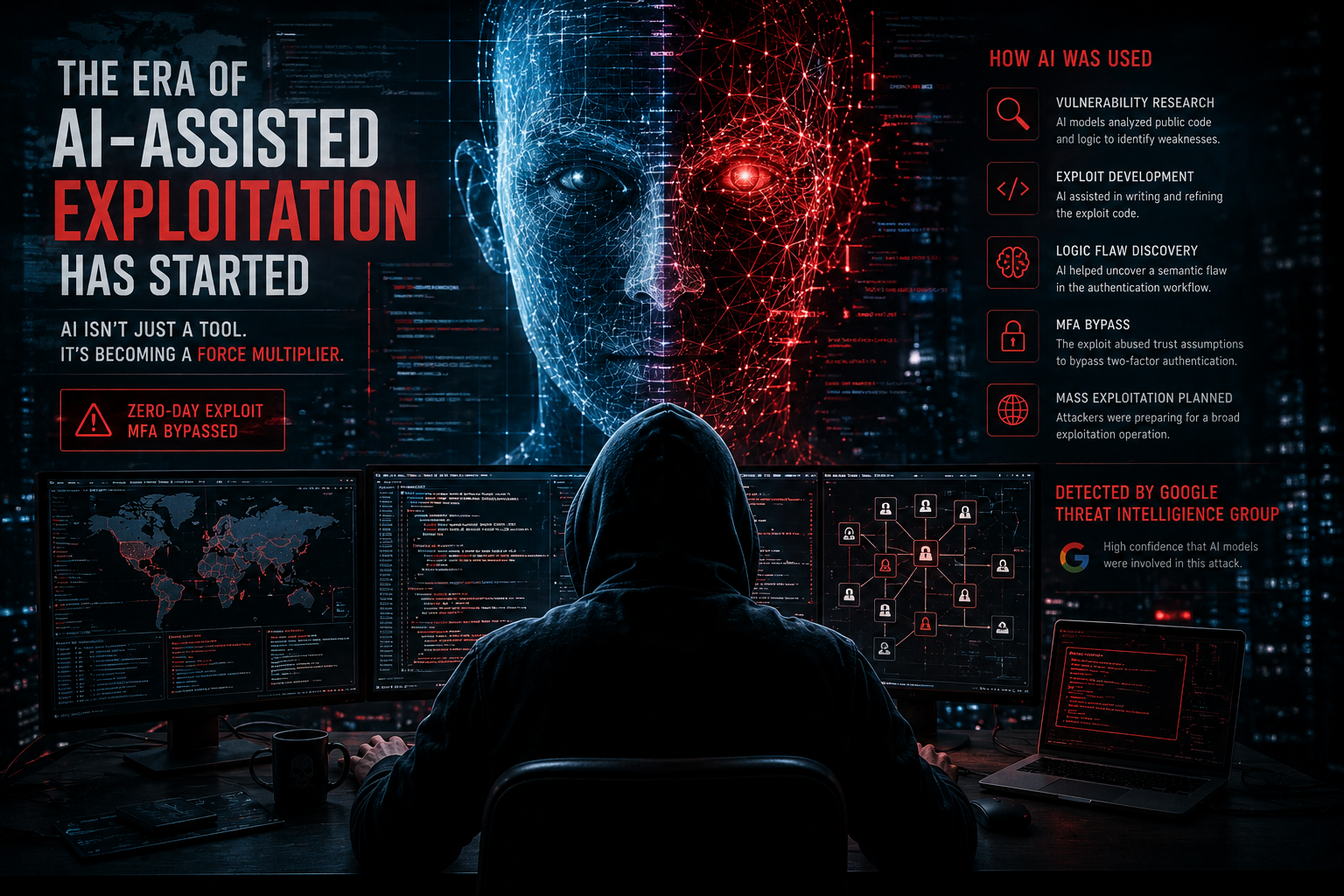

The Era of AI-Assisted Exploitation Has Started

For years, cybersecurity professionals have debated what artificial intelligence would eventually mean for offensive security. Some believed AI would mostly become a defensive tool used for detection and automation. Others warned it would eventually accelerate cybercrime itself.

Key Takeaway

AI does not need to replace attackers to fundamentally change cybersecurity — it only needs to accelerate them.

AI is now part of exploit development

This week, Google’s Threat Intelligence Group disclosed what may be the first documented case of attackers using AI assistance to help discover and weaponize a real-world zero-day vulnerability, producing an exploit designed to bypass two-factor authentication.

That sentence alone should get the attention of every security team.

AI-assisted exploits look different

Researchers reportedly found several indicators of AI involvement inside the exploit code itself. The Python script allegedly contained unusually structured educational comments, textbook-style formatting patterns, and even a hallucinated CVSS severity score, something exploit developers would not normally include in a functioning exploit.

In other words, the exploit looked partially written by a large language model.
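The indicators described above can be approximated with a rough triage heuristic. The sketch below is not the method Google's researchers used, which the report summarized here does not describe. The function name `llm_style_indicators`, the regular expressions, and the choice of signals are all assumptions made for illustration, and signals like these would produce false positives on ordinary well-commented code.

```python
import re

# Hypothetical patterns, chosen only for illustration.
# A CVSS mention followed shortly by a score such as "9.8" on the same line.
CVSS_PATTERN = re.compile(r"CVSS[^\n]{0,20}?\b\d{1,2}\.\d\b", re.IGNORECASE)
# Tutorial-style comment openers such as "# Step 1:" or "# Note:".
TUTORIAL_PATTERN = re.compile(
    r"^\s*#\s*(?:step\s+\d+|note:|example:|first,|next,|finally,)",
    re.IGNORECASE,
)


def llm_style_indicators(source: str) -> dict:
    """Return rough stylistic signals for a Python script's source text."""
    # Ignore blank lines so the ratio reflects actual content.
    content_lines = [ln for ln in source.splitlines() if ln.strip()]
    comment_lines = [ln for ln in content_lines if ln.lstrip().startswith("#")]
    ratio = len(comment_lines) / len(content_lines) if content_lines else 0.0
    return {
        # Share of non-blank lines that are full-line comments.
        "comment_ratio": ratio,
        # Count of comments that read like tutorial narration.
        "tutorial_comments": sum(
            1 for ln in comment_lines if TUTORIAL_PATTERN.match(ln)
        ),
        # Whether a severity score is embedded in the script itself.
        "embedded_cvss": bool(CVSS_PATTERN.search(source)),
    }
```

Calling `llm_style_indicators(open(path).read())` on a suspicious sample returns a small dictionary an analyst could use as one weak input among many. None of these signals proves authorship on its own.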

Semantic logic flaws are now targetable

The reported flaw itself was not a simple coding mistake or buffer overflow. It was a semantic logic flaw involving hardcoded trust assumptions inside an authentication workflow.

Historically, those kinds of vulnerabilities often required significant human reasoning to discover because they involve understanding how systems are supposed to behave rather than simply identifying broken syntax or unsafe memory handling. The line between flaws that demand human reasoning and flaws that tooling can surface is now beginning to blur.
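To make the idea of a hardcoded trust assumption concrete, here is a deliberately simplified sketch. It is not the flaw from the reported case, whose details are not given here. The header name `X-Internal-Client`, the `LoginRequest` type, and both functions are hypothetical. Every line is syntactically valid and memory-safe; the bug lies only in what the code assumes about who can set a header.

```python
from dataclasses import dataclass, field

# Hypothetical header that an internal tool is assumed to send.
TRUSTED_CLIENT_HEADER = "X-Internal-Client"


@dataclass
class LoginRequest:
    username: str
    password_ok: bool  # result of the password check (assumed done elsewhere)
    otp_ok: bool       # result of the second-factor check (assumed done elsewhere)
    headers: dict = field(default_factory=dict)


def login_flawed(req: LoginRequest) -> bool:
    """Flawed workflow: trusts a client-supplied header to skip 2FA."""
    if not req.password_ok:
        return False
    # Logic flaw: the code assumes only internal clients send this header,
    # but any caller can set it, so the second factor can be skipped.
    if req.headers.get(TRUSTED_CLIENT_HEADER) == "1":
        return True
    return req.otp_ok


def login_fixed(req: LoginRequest) -> bool:
    """Safer workflow: both factors are always required."""
    return req.password_ok and req.otp_ok
```

A request with a valid password, no valid one-time code, and the header set to `"1"` passes `login_flawed` but fails `login_fixed`. A scanner looking for unsafe memory handling or malformed syntax would find nothing wrong with either function, which is why this class of flaw has traditionally depended on someone reasoning about intended behavior.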

AI changes exploit economics

For decades, one of the natural barriers protecting organizations was the amount of time, expertise, and effort required to discover and weaponize vulnerabilities. Skilled exploit development has traditionally been a difficult discipline requiring deep technical understanding and significant research time.

AI changes the economics of that process. Even if AI does not independently create perfect exploits, it can substantially accelerate research, experimentation, analysis, debugging, and iteration.

The attack timeline is shortening

Modern attackers already move quickly. Ransomware groups, access brokers, and state-sponsored actors operate with increasing efficiency and scale. AI introduces the possibility of shortening the time between discovering a vulnerability and actively exploiting it in the wild.

That may ultimately become the defining cybersecurity story of the next decade.

Human expertise still matters

At the same time, it is important to avoid exaggeration. AI is not replacing skilled hackers overnight. Human creativity, persistence, and operational knowledge still matter enormously.

Attackers still need infrastructure, objectives, targeting, and real-world understanding of systems and environments. But AI does not need to replace attackers to fundamentally change cybersecurity. It only needs to accelerate them.

Real-World Risk

AI-accelerated exploit development reduces the time between vulnerability discovery and real-world exploitation, raising urgency for defenders.
